
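Hadoop's MapReduce environment is complex to program against, so we want to simplify building MapReduce projects as much as possible. Maven is a solid build-automation tool that frees us from tedious environment configuration and standardizes the development process. So before writing any MapReduce code, let's spend a little time sharpening the knife. Besides Maven there are other options, such as Gradle (recommended) and Ivy.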

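1. Introduction to Maven

Apache Maven is a project management and build automation tool for Java, provided by the Apache Software Foundation. Based on the concept of a Project Object Model (POM), Maven manages a project's build, reporting, and documentation from one central piece of information. It was once a subproject of the Jakarta project and is now a standalone Apache project.

Maven's developers state on their site that Maven's goal is to make building projects easier. It ties together the separate stages of development (compiling, packaging, testing, releasing) and produces consistent, high-quality project information so that team members get timely feedback. Maven supports test-first development and continuous integration well, reflecting a development philosophy that encourages communication and prompt feedback. If reuse in Ant is built on copy and paste, Maven achieves real reuse of build logic through its plugin mechanism.

Development environment: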

Win2008 64bit

Java-1.6.0_30

Maven-3.1.1

Hadoop-1.2.1

Eclipse Juno Service Release 2

2. Installing Maven (Windows)

1) Download Maven: http://maven.apache.org/download.cgi

Download the latest xxx-bin.zip file and unzip it on Windows:

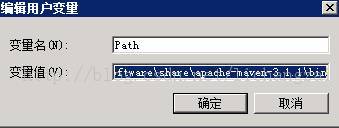

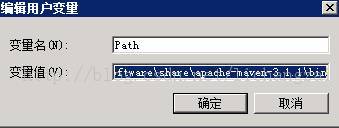

2) Set the environment variables

Unzip the archive on Windows to E:\software\share\apache-maven-3.1.1

and add Maven's bin directory to the PATH environment variable:

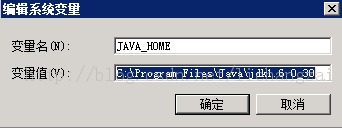

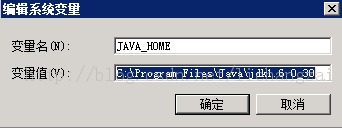

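Add the JAVA_HOME system variable:

3) Run a test

Then open a command prompt and type mvn to see the command run. The BUILD FAILURE is expected here, because no goal was specified; the output only confirms that Maven is installed and on the PATH.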

C:\Users\Administrator>mvn
[INFO] Scanning for projects...
[INFO] ------------------------------------------------------------------------
[INFO] BUILD FAILURE
[INFO] ------------------------------------------------------------------------
[INFO] Total time: 0.208s
[INFO] Finished at: Thu Dec 12 11:06:32 CST 2013
[INFO] Final Memory: 4M/490M
[INFO] ------------------------------------------------------------------------
[ERROR] No goals have been specified for this build. You must specify a valid lifecycle phase or a goal in the format <plugin-prefix>:<goal> or <plugin-group-id>:<plugin-artifact-id>[:<plugin-version>]:<goal>. Available lifecycle phases are: validate, initialize, generate-sources, process-sources, generate-resources, process-resources, compile, process-classes, generate-test-sources, process-test-sources, generate-test-resources, process-test-resources, test-compile, process-test-classes, test, prepare-package, package, pre-integration-test, integration-test, post-integration-test, verify, install, deploy, pre-clean, clean, post-clean, pre-site, site, post-site, site-deploy. -> [Help 1]
[ERROR]
[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.
[ERROR] Re-run Maven using the -X switch to enable full debug logging.
[ERROR]
[ERROR] For more information about the errors and possible solutions, please read the following articles:
[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/NoGoalSpecifiedException

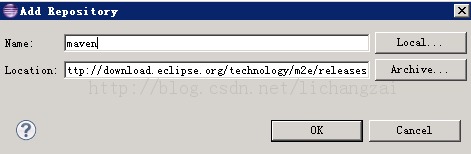

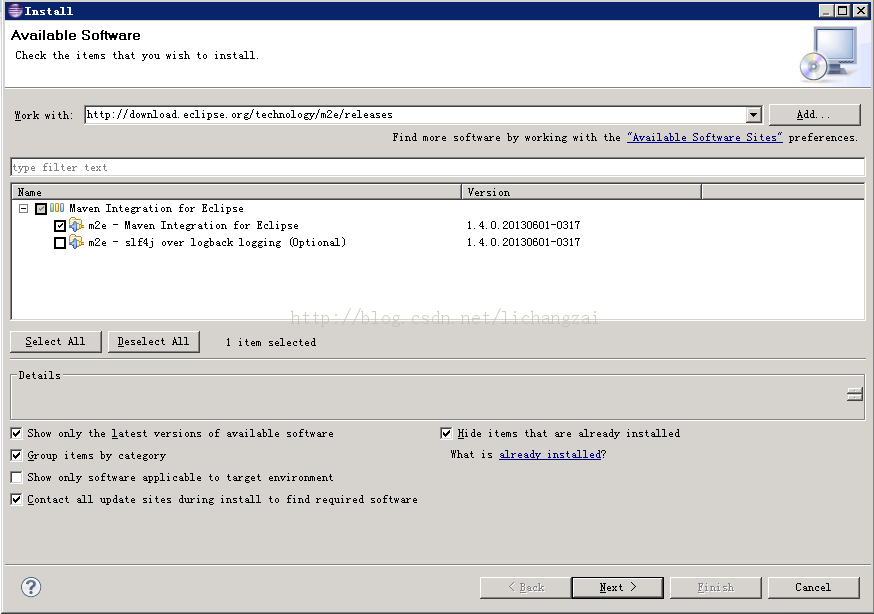

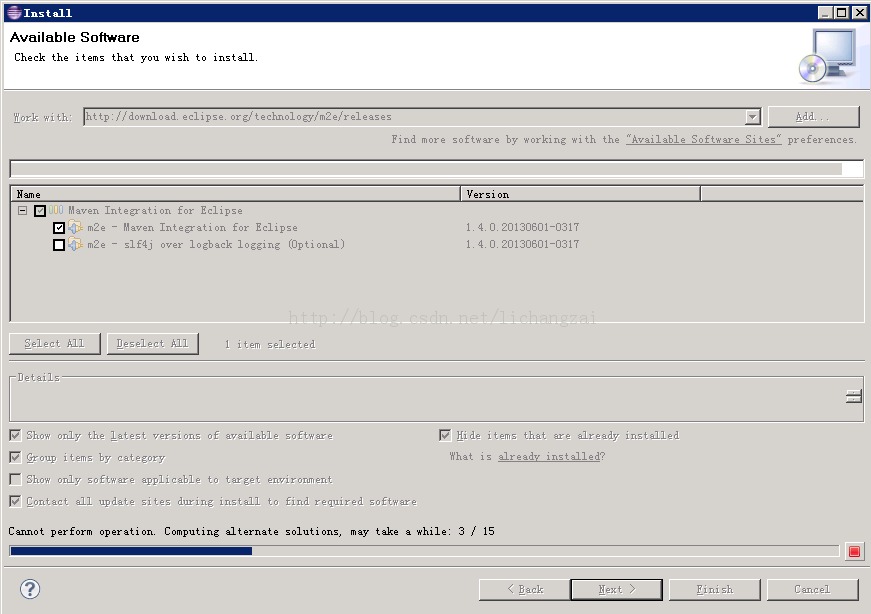

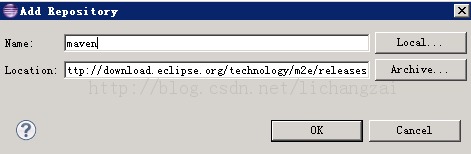

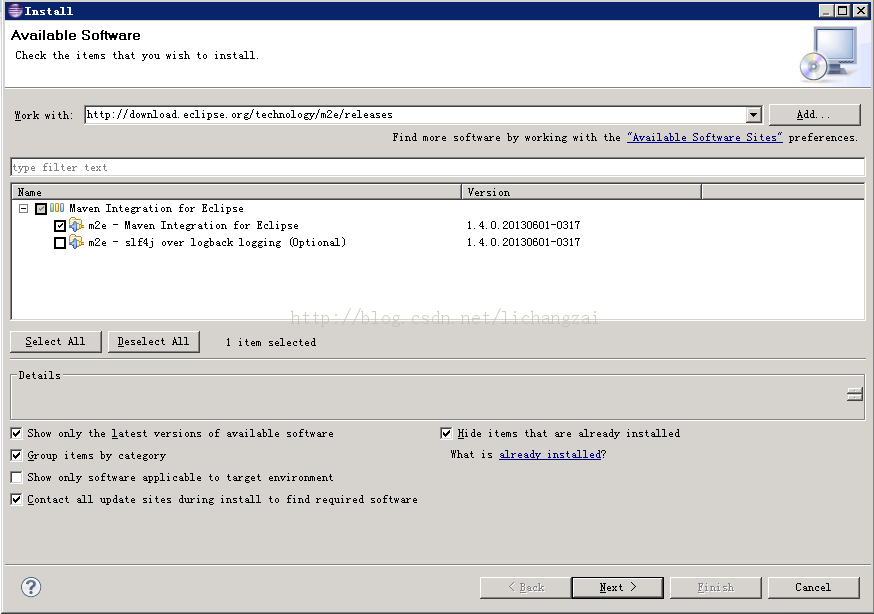

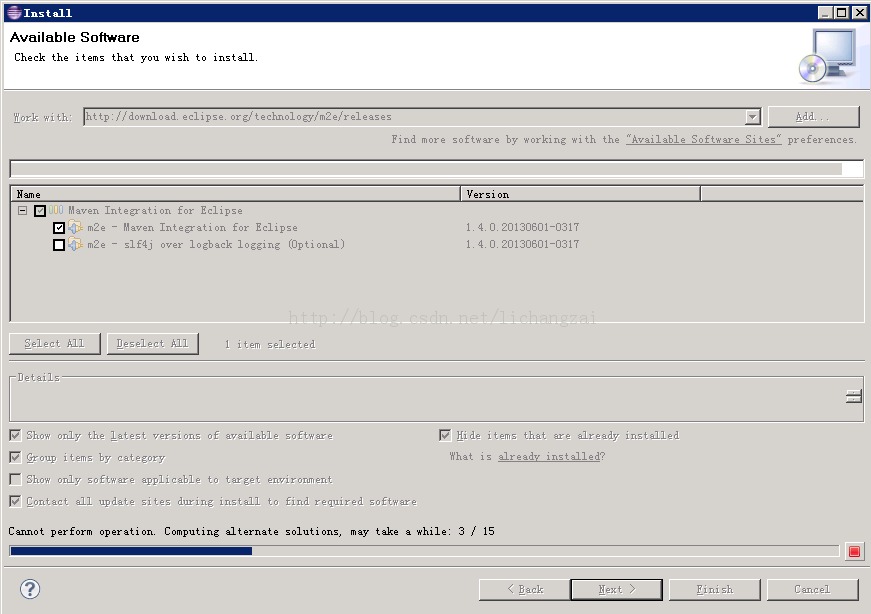

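4) Install the Maven plugin for Eclipse: Maven Integration for Eclipse

Copy the update-site URL http://download.eclipse.org/technology/m2e/releases

into Eclipse under Help -> Install New Software and install from there.

Installation takes roughly half an hour. (The network was unstable, so it took several attempts to succeed.)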

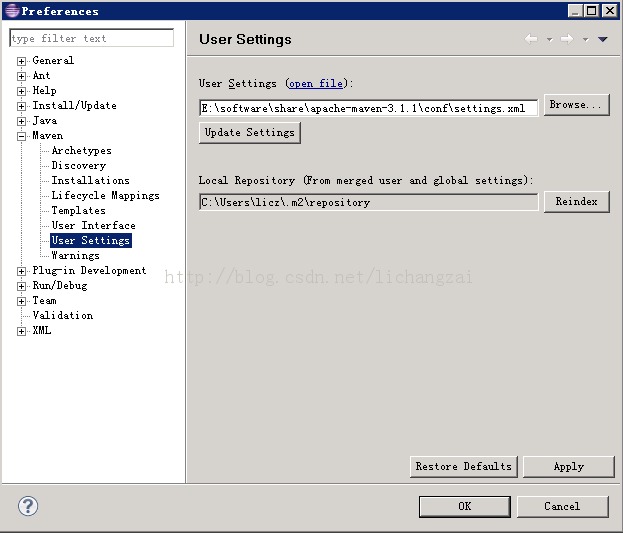

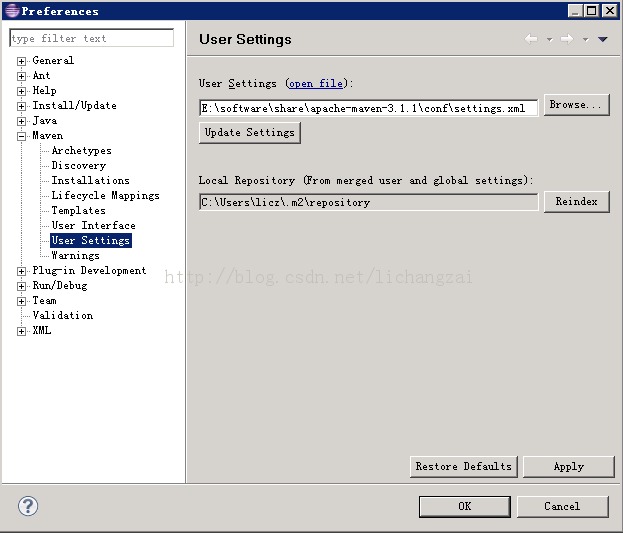

5) Configure the Maven plugin in Eclipse

Menu: Window -> Preferences


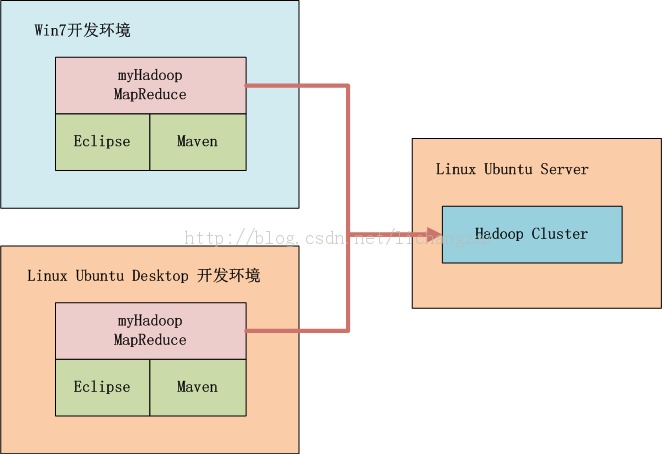

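3. The Hadoop development environment

As the figure above shows, we can develop on Windows or on Linux, and either start Hadoop locally or call a remote Hadoop cluster. The standard tools in both cases are Maven and Eclipse.

Hadoop cluster environment:

Linux: Oracle Linux Enterprise 5.9

Java: 1.6.0_30

Hadoop: hadoop-1.2.1

Three nodes: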

namenode: 10.1.32.91

datanode: 10.1.32.93

datanode: 10.1.32.95

4. Building the Hadoop environment with Maven

· 1. Create a standard Java project with Maven

· 2. Import the project into Eclipse

· 3. Add the Hadoop dependency by editing pom.xml

· 4. Download the dependencies

· 5. Download the Hadoop configuration files from the cluster

· 6. Configure the local hosts file

1). Create a standard Java project with Maven

C:\Users\licz>mvn archetype:generate -DarchetypeGroupId=org.apache.maven.archetypes -DgroupId=org.conan.myhadoop.mr -DartifactId=myHadoop -DpackageName=org.conan.myhadoop.mr -Dversion=1.0-SNAPSHOT -DinteractiveMode=false

[INFO]Scanning for projects…

[INFO]

[INFO]————————————————————————

[INFO]Building Maven Stub Project (No POM) 1

[INFO] ————————————————————————

[INFO]

[INFO] >>> maven-archetype-plugin:2.2:generate (default-cli) @standalone-pom >>>

[INFO]

[INFO] <<< maven-archetype-plugin:2.2:generate (default-cli) @standalone-pom <<<

[INFO]

[INFO] — maven-archetype-plugin:2.2:generate (default-cli) @standalone-pom —

[INFO]Generating project in Batch mode

[INFO]No archetype defined. Using maven-archetype-quickstart (org.apache.maven.archetypes:maven-archetype-quickstart:1.0)

Downloading:http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.jar

Downloaded:http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.jar(5 KB at 4.5 KB/sec)

Downloading:http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.pom

Downloaded:http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.pom(703 B at 1.2 KB/sec)

[INFO]—————————————————————————-

[INFO]Using following parameters for creating project from Old (1.x)Archetype:maven-archetype-quickstart:1.0

[INFO]—————————————————————————-

[INFO]Parameter: groupId, Value: org.conan.myhadoop.mr

[INFO]Parameter: packageName, Value: org.conan.myhadoop.mr

[INFO]Parameter: package, Value: org.conan.myhadoop.mr

[INFO]Parameter: artifactId, Value: myHadoop

[INFO]Parameter: basedir, Value: C:\Users\licz

[INFO]Parameter: version, Value: 1.0-SNAPSHOT

[INFO]project created from Old (1.x) Archetype in dir: C:\Users\licz\myHadoop

[INFO]————————————————————————

[INFO]BUILD SUCCESS

[INFO]————————————————————————

[INFO]Total time: 36.633s

[INFO]Finished at: Thu Jan 16 10:31:44 CST 2014

[INFO]Final Memory: 11M/490M

[INFO]————————————————————————

Change into the project directory and run the mvn command:

C:\Users\licz>cd myHadoop

C:\Users\licz\myHadoop>mvn clean install

……

[INFO]Installing C:\Users\licz\myHadoop\target\myHadoop-1.0-SNAPSHOT.jar to C:\Users\licz\.m2\repository\org\conan\myhadoop\mr\myHadoop\1.0-SNAPSHOT\myHadoop-1.0-SNAPSHOT.jar

[INFO]Installing C:\Users\licz\myHadoop\pom.xml to C:\Users\licz\.m2\repository\org\conan\myhadoop\mr\myHadoop\1.0-SNAPSHOT\myHadoop-1.0-SNAPSHOT.pom

[INFO]————————————————————————

[INFO]BUILD SUCCESS

[INFO]————————————————————————

[INFO]Total time: 2:06.911s

[INFO]Finished at: Thu Jan 16 14:52:00 CST 2014

[INFO]Final Memory: 9M/490M

[INFO]————————————————————————
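The two "Installing" lines above show how Maven lays out the local repository: the dots in the groupId become directories, followed by the artifactId, the version, and a file named artifactId-version. The sketch below reproduces that mapping in plain Java so the path of any installed artifact can be predicted. The class and method names (LocalRepoPath, artifactFile) are made up for this illustration and are not part of Maven.

```java
import java.io.File;

/** Sketch: where `mvn install` places an artifact in the local repository. */
class LocalRepoPath {

    // Builds <repo>/<groupId as directories>/<artifactId>/<version>/<artifactId>-<version>.<extension>
    static File artifactFile(File repoRoot, String groupId, String artifactId,
                             String version, String extension) {
        File dir = repoRoot;
        // Each dot-separated part of the groupId becomes one directory level
        for (String part : groupId.split("\\.")) {
            dir = new File(dir, part);
        }
        dir = new File(new File(dir, artifactId), version);
        return new File(dir, artifactId + "-" + version + "." + extension);
    }

    public static void main(String[] args) {
        // Default local repository location: <user home>/.m2/repository
        File repo = new File(new File(System.getProperty("user.home"), ".m2"), "repository");
        System.out.println(artifactFile(repo, "org.conan.myhadoop.mr", "myHadoop", "1.0-SNAPSHOT", "jar"));
        System.out.println(artifactFile(repo, "org.apache.hadoop", "hadoop-core", "1.2.1", "jar"));
    }
}
```

The second call yields the location of the hadoop-core JAR inside the local repository, which is the file that gets swapped out later in this article.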


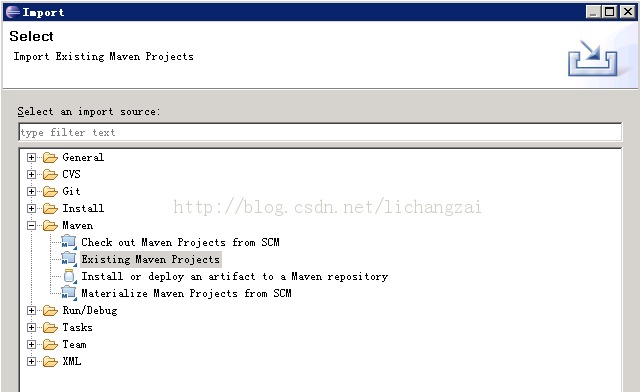

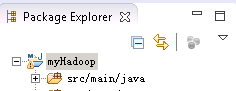

2). Import the project into Eclipse

We have created a basic Maven project; now import it into Eclipse. The Maven plugin should already be installed at this point.

Steps:

File -> Import -> Maven -> Existing Maven Projects

Click Next.

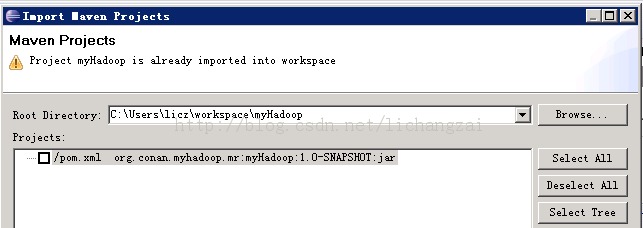

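Enter the directory of the Maven project created above.

Click Finish to complete the import.

3). Add the Hadoop dependency

I use hadoop-1.2.1 here. In Eclipse, edit pom.xml:

The main change is the added hadoop-core dependency.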

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>org.conan.myhadoop.mr</groupId>
  <artifactId>myHadoop</artifactId>
  <packaging>jar</packaging>
  <version>1.0-SNAPSHOT</version>
  <name>myHadoop</name>
  <url>http://maven.apache.org</url>
  <dependencies>
    <dependency>
      <groupId>org.apache.hadoop</groupId>
      <artifactId>hadoop-core</artifactId>
      <version>1.2.1</version>
    </dependency>
    <dependency>
      <groupId>junit</groupId>
      <artifactId>junit</artifactId>
      <version>3.8.1</version>
      <scope>test</scope>
    </dependency>
  </dependencies>
</project>

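4). Download the dependencies

C:\Users\licz\workspace\myHadoop>mvn clean install

When the command finishes, many dependency JARs appear under Maven Dependencies.

The project's dependencies are loaded onto the library path automatically.

5). Download the Hadoop configuration files from the cluster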

core-site.xml

hdfs-site.xml

mapred-site.xml

View core-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.default.name</name>

<value>hdfs://nticket1:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/app/hadoop/hadoop-1.2.1/tmp</value>

</property>

</configuration>

View hdfs-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.data.dir</name>

<value>/app/hadoop/hadoop-0.20.2/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

</configuration>

View mapred-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>mapred.job.tracker</name>

<value>nticket1:9001</value>

</property>

</configuration>
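All three site files share one simple shape: a configuration root element holding property elements, each with a name and a value child. As a rough illustration of that structure, the sketch below reads the name/value pairs with the JDK's built-in DOM parser. The class name SiteXmlReader and its method are hypothetical helpers for this example; Hadoop itself loads these files through its own Configuration class, not through code like this.

```java
import java.io.ByteArrayInputStream;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Sketch: list the <name>/<value> pairs of a Hadoop *-site.xml document. */
class SiteXmlReader {

    // Parses the XML text and returns the properties in file order.
    static Map<String, String> readProperties(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes("UTF-8")));
            Map<String, String> props = new LinkedHashMap<String, String>();
            NodeList nodes = doc.getElementsByTagName("property");
            for (int i = 0; i < nodes.getLength(); i++) {
                Element p = (Element) nodes.item(i);
                String name = p.getElementsByTagName("name").item(0).getTextContent().trim();
                String value = p.getElementsByTagName("value").item(0).getTextContent().trim();
                props.put(name, value);
            }
            return props;
        } catch (Exception e) {
            throw new IllegalStateException("Could not parse configuration XML", e);
        }
    }

    public static void main(String[] args) {
        // Same content as the core-site.xml shown above
        String xml = "<?xml version=\"1.0\"?>"
                + "<configuration>"
                + "<property><name>fs.default.name</name><value>hdfs://nticket1:9000</value></property>"
                + "<property><name>hadoop.tmp.dir</name><value>/app/hadoop/hadoop-1.2.1/tmp</value></property>"
                + "</configuration>";
        for (Map.Entry<String, String> e : readProperties(xml).entrySet()) {
            System.out.println(e.getKey() + " = " + e.getValue());
        }
    }
}
```

A quick check like this can confirm that a downloaded file is well-formed and carries the expected NameNode address before it is wired into a job.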

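Save the files under src/main/resources/hadoop.

Delete the auto-generated files App.java and AppTest.java.

6). Configure the local hosts file, adding a hostname entry for the master node nticket1

Edit the hosts file:

c:/Windows/System32/drivers/etc/hosts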

10.1.32.91 nticket1

5. Developing the MapReduce program

Write a simple MapReduce program that implements word count.

Create a new Java file: WordCount.java

package org.conan.myhadoop.mr;

import java.io.IOException;
import java.util.Iterator;
import java.util.StringTokenizer;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileInputFormat;
import org.apache.hadoop.mapred.FileOutputFormat;
import org.apache.hadoop.mapred.JobClient;
import org.apache.hadoop.mapred.JobConf;
import org.apache.hadoop.mapred.MapReduceBase;
import org.apache.hadoop.mapred.Mapper;
import org.apache.hadoop.mapred.OutputCollector;
import org.apache.hadoop.mapred.Reducer;
import org.apache.hadoop.mapred.Reporter;
import org.apache.hadoop.mapred.TextInputFormat;
import org.apache.hadoop.mapred.TextOutputFormat;

public class WordCount {

    // Mapper: splits each input line into tokens and emits (word, 1) for every token.
    public static class WordCountMapper extends MapReduceBase implements Mapper<Object, Text, Text, IntWritable> {
        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        @Override
        public void map(Object key, Text value, OutputCollector<Text, IntWritable> output, Reporter reporter) throws IOException {
            StringTokenizer itr = new StringTokenizer(value.toString());
            while (itr.hasMoreTokens()) {
                word.set(itr.nextToken());
                output.collect(word, one);
            }
        }
    }

    // Reducer (also used as combiner): sums the counts collected for one word.
    public static class WordCountReducer extends MapReduceBase implements Reducer<Text, IntWritable, Text, IntWritable> {
        private IntWritable result = new IntWritable();

        @Override
        public void reduce(Text key, Iterator<IntWritable> values, OutputCollector<Text, IntWritable> output, Reporter reporter) throws IOException {
            int sum = 0;
            while (values.hasNext()) {
                sum += values.next().get();
            }
            result.set(sum);
            output.collect(key, result);
        }
    }

    public static void main(String[] args) throws Exception {
        // Input file and output directory on the cluster's HDFS
        String input = "hdfs://10.1.32.91:9000/user/licz/hdfs/o_t_account/test.txt";
        String output = "hdfs://10.1.32.91:9000/user/licz/hdfs/o_t_account/result";

        JobConf conf = new JobConf(WordCount.class);
        conf.setJobName("WordCount");
        conf.addResource("classpath:/hadoop/core-site.xml");
        conf.addResource("classpath:/hadoop/hdfs-site.xml");
        conf.addResource("classpath:/hadoop/mapred-site.xml");

        conf.setOutputKeyClass(Text.class);
        conf.setOutputValueClass(IntWritable.class);

        conf.setMapperClass(WordCountMapper.class);
        conf.setCombinerClass(WordCountReducer.class);
        conf.setReducerClass(WordCountReducer.class);

        conf.setInputFormat(TextInputFormat.class);
        conf.setOutputFormat(TextOutputFormat.class);

        FileInputFormat.setInputPaths(conf, new Path(input));
        FileOutputFormat.setOutputPath(conf, new Path(output));

        JobClient.runJob(conf);
        System.exit(0);
    }

}
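To see what the mapper and reducer compute without a cluster, the same logic can be traced in plain Java. This is only a sketch of the data flow, not Hadoop code: the class LocalWordCount, its methods, and the two sample lines are invented for illustration. A sorted map stands in for the shuffle step that groups values by key.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

/** Sketch: the word-count map and reduce steps on in-memory data, no Hadoop required. */
class LocalWordCount {

    // Map step: emit one (word, 1) pair per whitespace-separated token, like WordCountMapper.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<Map.Entry<String, Integer>>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            pairs.add(new AbstractMap.SimpleEntry<String, Integer>(itr.nextToken(), 1));
        }
        return pairs;
    }

    // Reduce step: group the pairs by word (sorted by key) and sum the counts, like WordCountReducer.
    static Map<String, Integer> reduce(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> counts = new TreeMap<String, Integer>();
        for (Map.Entry<String, Integer> pair : pairs) {
            Integer current = counts.get(pair.getKey());
            counts.put(pair.getKey(), current == null ? pair.getValue() : current + pair.getValue());
        }
        return counts;
    }

    // Runs the map step over every line, then reduces all emitted pairs.
    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> all = new ArrayList<Map.Entry<String, Integer>>();
        for (String line : lines) {
            all.addAll(map(line));
        }
        return reduce(all);
    }

    public static void main(String[] args) {
        // Hypothetical sample input; the real job reads test.txt from HDFS
        System.out.println(wordCount(Arrays.asList("hello hadoop", "hello maven")));
        // prints {hadoop=1, hello=2, maven=1}
    }
}
```

Because summing is associative, the same reduce logic can also run as a combiner on partial map output, which is why the job registers WordCountReducer for both roles.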

Console error on the first run:

2013-9-30 19:25:02 org.apache.hadoop.util.NativeCodeLoader
WARNING: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable
2013-9-30 19:25:02 org.apache.hadoop.security.UserGroupInformation doAs
SEVERE: PriviledgedActionException as:Administrator cause:java.io.IOException: Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator1702422322\.staging to 0700
Exception in thread "main" java.io.IOException: Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator1702422322\.staging to 0700
    at org.apache.hadoop.fs.FileUtil.checkReturnValue(FileUtil.java:689)
    at org.apache.hadoop.fs.FileUtil.setPermission(FileUtil.java:662)
    at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:509)
    at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:344)
    at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:189)
    at org.apache.hadoop.mapreduce.JobSubmissionFiles.getStagingDir(JobSubmissionFiles.java:116)
    at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:856)
    at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:850)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:396)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1121)
    at org.apache.hadoop.mapred.JobClient.submitJobInternal(JobClient.java:850)
    at org.apache.hadoop.mapred.JobClient.submitJob(JobClient.java:824)
    at org.apache.hadoop.mapred.JobClient.runJob(JobClient.java:1261)
    at org.conan.myhadoop.mr.WordCount.main(WordCount.java:78)

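This error is specific to developing on Windows. It is a file-permission problem; the same code runs normally on Linux.

The fix is to edit /hadoop-1.2.1/src/core/org/apache/hadoop/fs/FileUtil.java,

comment out lines 688-692, then recompile the source and build a new hadoop.jar package.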

685 private static void checkReturnValue(boolean rv, File p,
686                                      FsPermission permission
687                                      ) throws IOException {
688   /*if (!rv) {
689     throw new IOException("Failed to set permissions of path: " + p +
690                           " to " +
691                           String.format("%04o", permission.toShort()));
692   }*/
693 }

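Note: for convenience, I downloaded an already-compiled hadoop-core-1.2.1.jar from the web.

We also need to replace the Hadoop library in the local Maven repository.

~ cp lib/hadoop-core-1.2.1.jar C:\Users\licz\.m2\repository\org\apache\hadoop\hadoop-core\1.2.1\hadoop-core-1.2.1.jar

Start the Java application; the console prints: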

2014-1-17 11:25:27 org.apache.hadoop.util.NativeCodeLoader <clinit>
WARNING: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable
2014-1-17 11:25:27 org.apache.hadoop.mapred.JobClient copyAndConfigureFiles
WARNING: Use GenericOptionsParser for parsing the arguments. Applications should implement Tool for the same.
2014-1-17 11:25:27 org.apache.hadoop.mapred.JobClient copyAndConfigureFiles
WARNING: No job jar file set. User classes may not be found. See JobConf(Class) or JobConf#setJar(String).
2014-1-17 11:25:27 org.apache.hadoop.io.compress.snappy.LoadSnappy <clinit>
WARNING: Snappy native library not loaded
2014-1-17 11:25:27 org.apache.hadoop.mapred.FileInputFormat listStatus
INFO: Total input paths to process : 1
2014-1-17 11:25:27 org.apache.hadoop.mapred.JobClient monitorAndPrintJob
INFO: Running job: job_local2052617578_0001
2014-1-17 11:25:27 org.apache.hadoop.mapred.LocalJobRunner$Job run
INFO: Waiting for map tasks
2014-1-17 11:25:27 org.apache.hadoop.mapred.LocalJobRunner$Job$MapTaskRunnable run
INFO: Starting task: attempt_local2052617578_0001_m_000000_0
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task initialize
INFO: Using ResourceCalculatorPlugin : null
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask updateJobWithSplit
INFO: Processing split: hdfs://10.1.32.91:9000/user/licz/hdfs/o_t_account/test.txt:0+46
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask runOldMapper
INFO: numReduceTasks: 1
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask$MapOutputBuffer <init>
INFO: io.sort.mb = 100
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask$MapOutputBuffer <init>
INFO: data buffer = 79691776/99614720
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask$MapOutputBuffer <init>
INFO: record buffer = 262144/327680
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask$MapOutputBuffer flush
INFO: Starting flush of map output
2014-1-17 11:25:28 org.apache.hadoop.mapred.MapTask$MapOutputBuffer sortAndSpill
INFO: Finished spill 0
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task done
INFO: Task:attempt_local2052617578_0001_m_000000_0 is done. And is in the process of commiting
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate
INFO: hdfs://10.1.32.91:9000/user/licz/hdfs/o_t_account/test.txt:0+46
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task sendDone
INFO: Task 'attempt_local2052617578_0001_m_000000_0' done.
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job$MapTaskRunnable run
INFO: Finishing task: attempt_local2052617578_0001_m_000000_0
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job run
INFO: Map task executor complete.
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task initialize
INFO: Using ResourceCalculatorPlugin : null
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate
INFO:
2014-1-17 11:25:28 org.apache.hadoop.mapred.Merger$MergeQueue merge
INFO: Merging 1 sorted segments
2014-1-17 11:25:28 org.apache.hadoop.mapred.Merger$MergeQueue merge
INFO: Down to the last merge-pass, with 1 segments left of total size: 80 bytes
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate
INFO:
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task done
INFO: Task:attempt_local2052617578_0001_r_000000_0 is done. And is in the process of commiting
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate
INFO:
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task commit
INFO: Task attempt_local2052617578_0001_r_000000_0 is allowed to commit now
2014-1-17 11:25:28 org.apache.hadoop.mapred.FileOutputCommitter commitTask
INFO: Saved output of task 'attempt_local2052617578_0001_r_000000_0' to hdfs://10.1.32.91:9000/user/licz/hdfs/o_t_account/result
2014-1-17 11:25:28 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate
INFO: reduce > reduce
2014-1-17 11:25:28 org.apache.hadoop.mapred.Task sendDone
INFO: Task 'attempt_local2052617578_0001_r_000000_0' done.
2014-1-17 11:25:28 org.apache.hadoop.mapred.JobClient monitorAndPrintJob
INFO: map 100% reduce 100%
2014-1-17 11:25:28 org.apache.hadoop.mapred.JobClient monitorAndPrintJob
INFO: Job complete: job_local2052617578_0001
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Counters: 20
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: File Input Format Counters
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Bytes Read=46
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: File Output Format Counters
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Bytes Written=50
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: FileSystemCounters
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: FILE_BYTES_READ=430
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: HDFS_BYTES_READ=92
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: FILE_BYTES_WRITTEN=137344
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: HDFS_BYTES_WRITTEN=50
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Map-Reduce Framework
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Map output materialized bytes=84
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Map input records=5
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Reduce shuffle bytes=0
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Spilled Records=14
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Map output bytes=81
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Total committed heap usage (bytes)=1029046272
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Map input bytes=46
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Combine input records=9
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: SPLIT_RAW_BYTES=111
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Reduce input records=7
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Reduce input groups=7
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Combine output records=7
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Reduce output records=7
2014-1-17 11:25:28 org.apache.hadoop.mapred.Counters log
INFO: Map output records=9

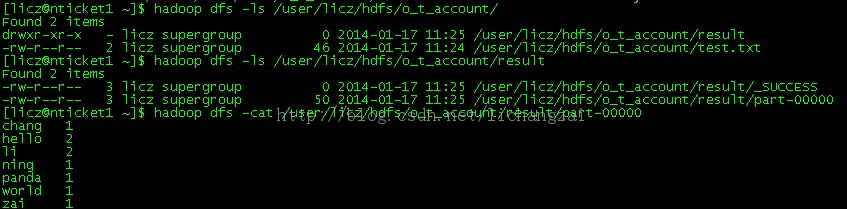

The wordcount program ran successfully. We can check the output with a command:

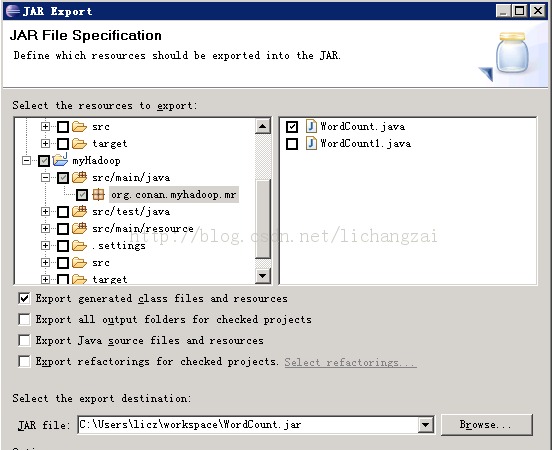

Alternatively, export a JAR from Eclipse and upload it to the server to run:

File -> Export -> Java -> JAR file

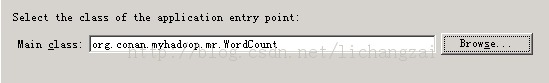

Click Next.

If you do not select a Main class during export, specify the main class at run time, as follows:

[licz@nticket1 ~]$ hadoop jar /app/hadoop/WordCount.jar WordCount

6. Problems encountered

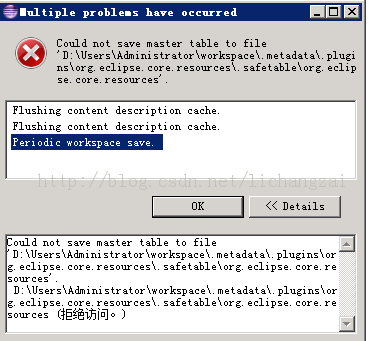

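Problem 1:

This was probably a permissions problem with the system user. After switching to another server and installing Eclipse under a newly created user, creating the project worked normally.

Problem 2:

Description Resource Path Location Type

Build path specifies execution environment J2SE-1.5. There are no JREs installed in the workspace that are strictly compatible with this environment. myHadoop Build path JRE System Library Problem

Solution:

Right-click the project's JRE System Library [JavaSE-1.5] -> Properties.

Under Execution environment, select JavaSE-1.6 (jre6).

After clicking OK, the exclamation-mark warning on the project name disappears.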
